Text Feature Extraction is the process of transforming text into numerical feature vectors that can be used by Machine Learning Algorithms. This blog focuses on two ways of transforming text into numerical values with Python's Scikit-Learn library. A text document or corpus cannot be fed directly into Machine Learning Algorithms, because these algorithms expect numerical input in the form of feature vectors or matrices. There are many ways of converting a corpus into feature vectors, such as Bag of Words and TF-IDF Weighting. Before digging deeper, let's first define some important terminology in the area of Text Feature Extraction.

IMPORTANT TERMINOLOGY:

Corpus:- This is the collection of all documents or texts, usually stored as a list of strings (one string per document).

Document:- This represents each element in the corpus and it is a piece of text of any length.

Token:- A document or text usually first needs to be broken down into small chunks referred to as tokens (typically words). This process is called tokenization, and on its own it can be a rather comprehensive topic, as there are multiple tokenization strategies that take into account delimiters, n-grams and regular expressions.

Count/Frequency:- The number of times a token occurs in a text document represents that token's frequency within the document.

Feature:- Each distinct token identified across the entire corpus is treated as a feature with a unique integer ID, which is its column in the final feature vector. Keep in mind that the order of the features carries no information about the order in which tokens appear in the documents.

Feature Vector:- A numerical vector representation of a document. For a whole corpus, the feature vectors are stacked into a matrix where each row represents a document and the columns represent the tokens found across the entire corpus.

Vectorization:- This is the process of converting a corpus or collection of text documents into a numerical feature matrix where the columns represent the tokens found in the entire corpus and each row represents a document.
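To make these terms concrete, here is a minimal sketch using Scikit-Learn's CountVectorizer on a small corpus invented for illustration. It assumes a recent Scikit-Learn release. The corpus is a plain Python list of strings, the analyzer shows how one document is tokenized, `vocabulary_` maps each feature (token) to its integer column ID, and the resulting matrix has one row per document and one column per token.

```python
from sklearn.feature_extraction.text import CountVectorizer

# A hypothetical corpus: a list of strings, one string per document.
corpus = [
    "The cat sat on the mat.",
    "The dog sat on the log.",
    "Cats and dogs are pets.",
]

vectorizer = CountVectorizer()

# Tokenization: the analyzer lowercases the text and splits it using the
# default token pattern (runs of two or more word characters).
analyze = vectorizer.build_analyzer()
print(analyze(corpus[0]))  # ['the', 'cat', 'sat', 'on', 'the', 'mat']

# Vectorization: learn the vocabulary and build the document-term matrix.
X = vectorizer.fit_transform(corpus)

# Features: each distinct token gets a unique integer column ID.
print(vectorizer.vocabulary_)

# Rows are documents, columns are features, values are token counts.
print(X.shape)      # (3, 12)
print(X.toarray())
```

Note that "cat" and "cats" become separate features, since CountVectorizer does no stemming by default.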

VECTORIZATION STRATEGIES:

1. Bag of Words:

This is a vectorization strategy that involves tokenization, frequency counting and normalization. Take a corpus of text documents and tokenize each document using a chosen tokenization strategy. Give each distinct token across the entire corpus an integer ID, and use these IDs as the feature (column) indices of the resulting matrix. Keep in mind that word ordering is not preserved. For each document in the corpus, count the frequency of each token and store that value in the corresponding feature column of the document's row. The resulting matrix contains one row per text document and one column per token in the entire corpus, with each cell storing a frequency. Lastly, apply some form of normalization or weighting that diminishes the importance of tokens occurring in the majority of documents. Scikit-Learn's CountVectorizer itself stops at the counting step; TF-IDF weighting, described below, is a common way to perform this last step.
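The tokenize, assign-IDs and count steps can be sketched in plain Python without any library. This is a simplified illustration, not how Scikit-Learn implements it. The regular-expression tokenizer and the helper name `bag_of_words` are assumptions made for this example. The final weighting step is left out, since that is what TF-IDF adds.

```python
import re


def bag_of_words(corpus):
    """Hypothetical helper: tokenize, assign integer IDs, and count tokens."""
    # 1. Tokenize: lowercase and keep runs of two or more word characters
    #    (an assumed pattern, similar in spirit to Scikit-Learn's default).
    tokenized = [re.findall(r"\b\w\w+\b", doc.lower()) for doc in corpus]

    # 2. Give each distinct token in the whole corpus an integer ID.
    distinct = sorted({tok for tokens in tokenized for tok in tokens})
    vocabulary = {tok: i for i, tok in enumerate(distinct)}

    # 3. Count: one row per document, one column per token ID.
    matrix = [[0] * len(vocabulary) for _ in corpus]
    for row, tokens in zip(matrix, tokenized):
        for tok in tokens:
            row[vocabulary[tok]] += 1
    return vocabulary, matrix


corpus = ["The cat sat on the mat.", "The dog sat on the log."]
vocab, counts = bag_of_words(corpus)
print(vocab)   # {'cat': 0, 'dog': 1, 'log': 2, 'mat': 3, 'on': 4, 'sat': 5, 'the': 6}
print(counts)  # [[1, 0, 0, 1, 1, 1, 2], [0, 1, 1, 0, 1, 1, 2]]
```

Because only counts are stored, "the cat sat" and "sat the cat" would produce identical rows, which is what "word ordering is not preserved" means in practice.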

Scikit-Learn's CountVectorizer class can be used to implement the Bag of Words vectorization strategy. CountVectorizer contains many parameters. Some important ones are:

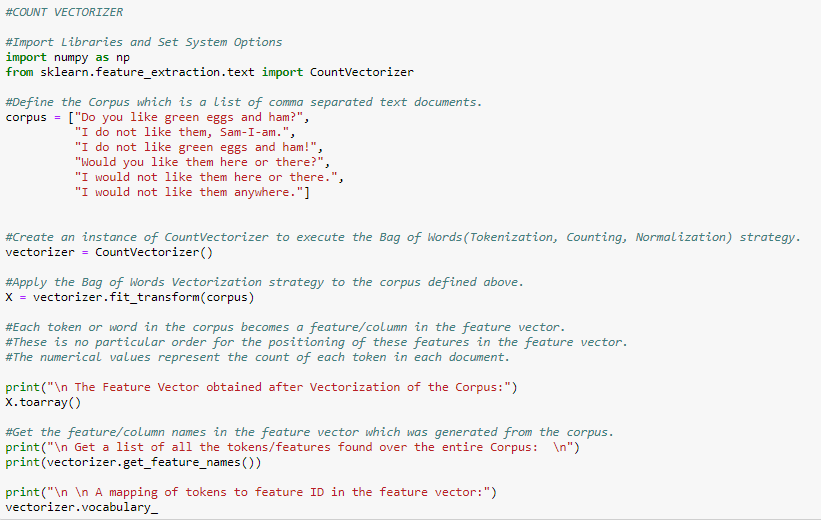

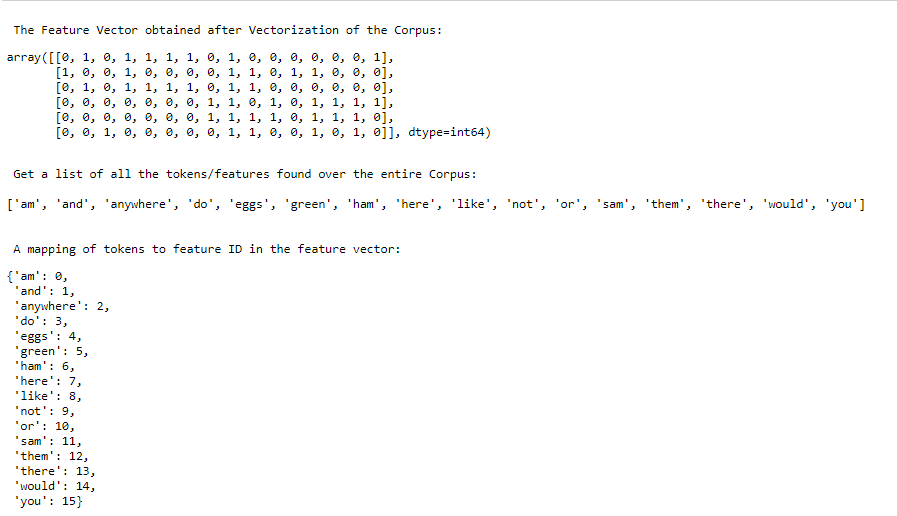


- lowercase

- max_df

- min_df

- ngram_range

- stop_words

- strip_accents

- token_pattern

- tokenizer

- max_features

Visit the Scikit-Learn website for a more in-depth explanation of the roles of these parameters. The following Python code is an illustration of Bag of Words using CountVectorizer from Python's Scikit-Learn library.

2. TF-IDF Weighting:

TF-IDF (term frequency-inverse document frequency) weighting takes the entire corpus into account, not just a single document, when weighting each token. It addresses the problem of frequent but uninformative tokens versus rare but informative ones. A word that occurs in most documents of the corpus (such as "the") is unlikely to add much value to the analysis, so TF-IDF multiplies each token's frequency within a document by a factor that shrinks as the number of documents containing that token grows. As a result, terms that occur in many documents are down-weighted, and terms that occur in only a few documents are weighted more heavily.
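For reference, the Scikit-Learn documentation describes TfidfVectorizer's default weighting (with `smooth_idf=True`) roughly as follows. Here $n$ is the number of documents, $\text{tf}(t, d)$ is the count of term $t$ in document $d$, and $\text{df}(t)$ is the number of documents that contain $t$. Textbook definitions of IDF vary slightly, for example by omitting the $+1$ terms.

$$
\text{tfidf}(t, d) = \text{tf}(t, d) \cdot \text{idf}(t), \qquad
\text{idf}(t) = \ln\!\left(\frac{1 + n}{1 + \text{df}(t)}\right) + 1
$$

As a worked example with $n = 3$ documents: a term found in all three gets $\text{idf} = \ln(4/4) + 1 = 1$, while a term found in only one gets $\text{idf} = \ln(4/2) + 1 \approx 1.693$. The rarer term's counts are therefore multiplied by a larger factor. Each resulting row is then normalized according to the `norm` parameter.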

Some parameters of Scikit-Learn's TfidfVectorizer worth mentioning are:

norm:- This parameter controls whether each document vector is normalized and, if so, which norm is used ('l1' or 'l2'; the default is 'l2', and None disables normalization). Normalization is generally advisable when the vectors will be used by a classifier, since it puts long and short documents on a comparable scale.

use_idf:- This parameter controls whether to enable inverse-document-frequency (IDF) reweighting.

smooth_idf:- When this parameter is True (the default), one is added to every document frequency, as if an extra document containing every term in the collection exactly once had been seen. This prevents divisions by zero.
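The effect of these three parameters can be checked numerically. This sketch uses an invented corpus. It recomputes the smoothed IDF by hand with NumPy, following the formula in Scikit-Learn's documentation, and compares the result with the fitted `idf_` attribute. It also confirms three further points: the default `norm="l2"` gives every row unit length, `norm="l1"` makes each row's absolute values sum to one, and `use_idf=False` combined with `norm=None` reduces to raw counts.

```python
import numpy as np
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

corpus = [
    "The cat sat on the mat.",
    "The dog sat on the log.",
    "The cat chased the dog.",
]

# Raw counts; CountVectorizer and TfidfVectorizer share the same default tokenization.
counts = CountVectorizer().fit_transform(corpus).toarray()
n_docs = counts.shape[0]
df = (counts > 0).sum(axis=0)  # number of documents containing each term

# smooth_idf=True: add 1 to n and to df, as if one extra document held every term once.
manual_idf = np.log((1 + n_docs) / (1 + df)) + 1

tfidf = TfidfVectorizer()  # defaults: norm="l2", use_idf=True, smooth_idf=True
X = tfidf.fit_transform(corpus).toarray()
print(np.allclose(tfidf.idf_, manual_idf))  # True
print(np.linalg.norm(X, axis=1))            # each row has L2 norm 1

# norm="l1": absolute values in each row sum to 1.
X_l1 = TfidfVectorizer(norm="l1").fit_transform(corpus).toarray()
print(np.abs(X_l1).sum(axis=1))

# Without IDF reweighting or normalization, the output equals the raw counts.
X_raw = TfidfVectorizer(use_idf=False, norm=None).fit_transform(corpus).toarray()
print(np.array_equal(X_raw, counts))  # True
```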

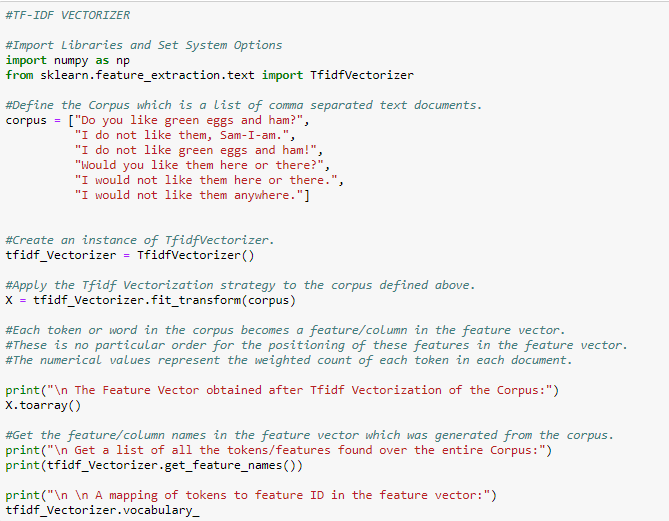

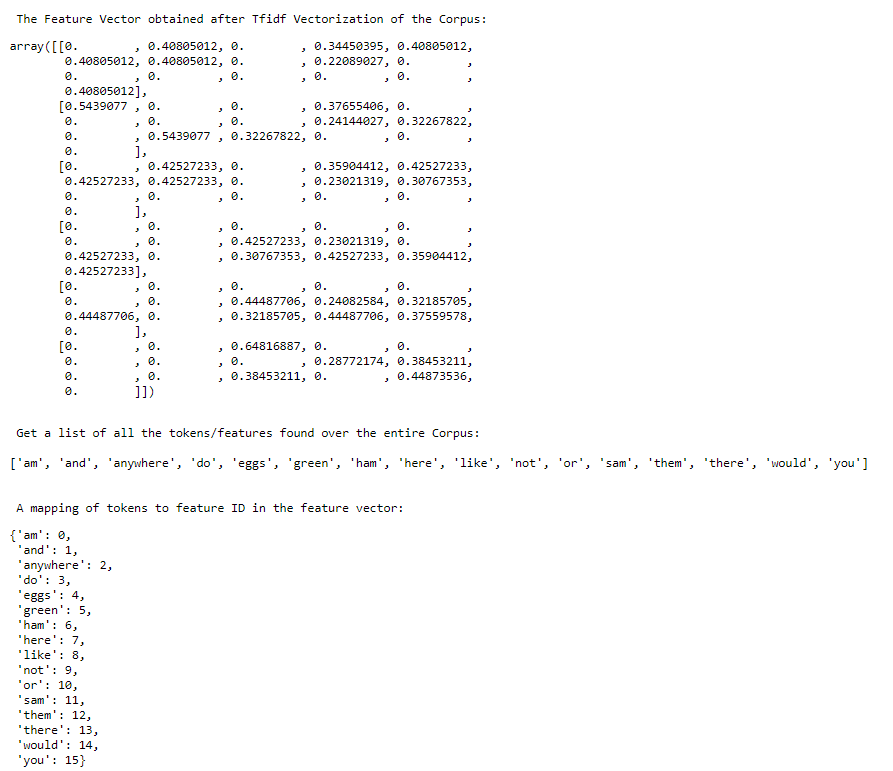

The following Python code shows how Scikit-Learn's TfidfVectorizer can be used.

CONCLUSION:

Text Feature Extraction is an important step in Natural Language Processing. It is a strategy for transforming text into numerical feature vectors to be used by Machine Learning Algorithms. Bag of Words and TF-IDF Vectorization are two well-known examples. It is up to the Data Scientist to pick the strategy that best suits the given problem statement.

Happy Learning!